Introduction

One of the biggest challenges for DevOps teams is managing application dependencies and the technology stack across different cloud and development environments. Their routines typically involve keeping the application operational no matter where it runs, ideally without having to change much of its code.

Docker helps engineers work efficiently and reduces operational overhead, so that a developer in any development environment can build stable and reliable applications. It is a containerization technology mainly used to package and distribute applications across different platforms, regardless of the underlying operating system. When you run the “docker run” command on your host, Docker automatically pulls the image from a Docker registry if it is not already present locally.
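
The pull-if-missing behaviour described above can be sketched as a small in-memory model. This is only an illustration: the `Registry` and `Host` classes and the `pulls` counter are hypothetical names, not part of Docker, and a real pull transfers image layers over the network.

```python
from dataclasses import dataclass, field


@dataclass
class Registry:
    """Hypothetical stand-in for a remote image registry."""
    images: dict[str, bytes]

    def fetch(self, name: str) -> bytes:
        # A real registry would transfer image layers over the network.
        return self.images[name]


@dataclass
class Host:
    """Hypothetical stand-in for a Docker host and its local image cache."""
    registry: Registry
    local_images: dict[str, bytes] = field(default_factory=dict)
    pulls: int = 0

    def run(self, name: str) -> str:
        # Pull only when the image is not already present on the host.
        if name not in self.local_images:
            self.local_images[name] = self.registry.fetch(name)
            self.pulls += 1
        return f"started container from {name}"


registry = Registry(images={"hello-world:latest": b"layers"})
host = Host(registry=registry)
host.run("hello-world:latest")  # first run pulls the image
host.run("hello-world:latest")  # second run reuses the local copy
print(host.pulls)
```

Running the same image twice triggers only one pull, which is why a second “docker run” of an image usually starts much faster than the first.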

Docker lets you package and run an application in an isolated environment, which makes redistributing and managing applications far more forgiving than it was in the past. Docker containers share the host’s OS kernel, but each one bundles the application together with its dependencies, so it is self-contained. You can easily share containers while you work and be confident that everyone you share with gets a container that behaves the same way, whatever machine they run it on or network they are connected to.

What is Docker?

Docker is a software development platform built around containers. A container is a lightweight, stand-alone, executable package that contains everything needed to run a piece of software. Containers are similar to virtual machines, but they can be more efficient because they share the host’s kernel and so avoid the overhead of running a complete, isolated guest operating system.

Containers make it easier for developers to deploy applications on Linux-based platforms without worrying about the specific environment in which the code will run, because the container carries its environment with it. A developer can even run Docker inside a virtual machine, using containers as a second layer of virtualization. However, if the main purpose is simply to deploy microservices using Docker tools, then a cloud hosting provider’s “one-click” deployment process may be enough.

Docker runs on both Windows and Linux-based platforms. Many developers also choose to run Docker within a virtual machine when they need more isolation or want to simulate a hosting environment like AWS. A main benefit of containers is that they let you run microservice applications in a distributed architecture.

Docker containerization moves the abstraction of resources up from the hardware level to the operating system level. This brings benefits such as application portability, separation from the infrastructure, and self-contained microservices.

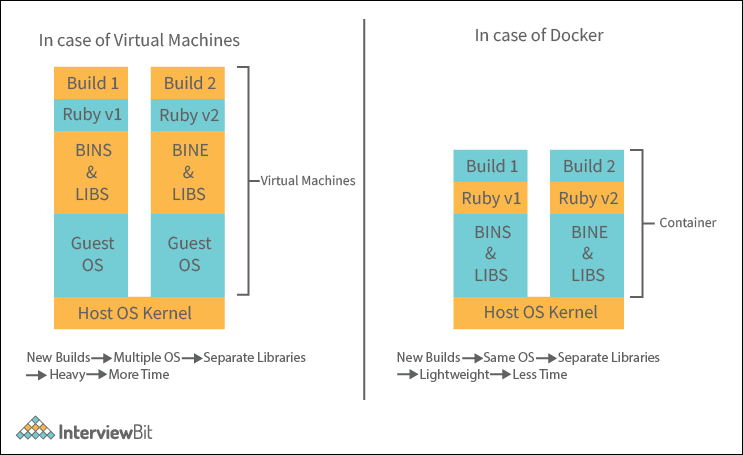

Virtual Machines Vs Docker Containers

Virtual machines used to be the default for many areas of application lifecycle management, but containers are now at the top of the DevOps deck. Originally, VMs provided a foundation on which applications could be built and tested in a simulated environment. But VMs had drawbacks: they were restricted in where they could run because they needed specific configurations, and host machine capacity had to be planned beforehand. Containers address this by separating working environments from the actual infrastructure, packaging applications as lightweight, OS-level virtualization environments.

The key difference is the level of abstraction. Virtual machines (VMs) abstract the computing hardware and give each guest OS its own dedicated environment. Containers abstract the operating system instead: rather than booting a guest OS for each workload, they run directly on top of the host operating system and share its kernel.

Docker Statistics & Facts

According to recent statistics, two-thirds of companies that try Docker go on to adopt it. Most companies that adopt it do so within 30 days of initial production usage, and almost all the remaining adopters convert within 60 days. Docker adoption has increased by 30% in the last year. PHP and Java are the main programming languages used in containers.

Docker’s Workflow

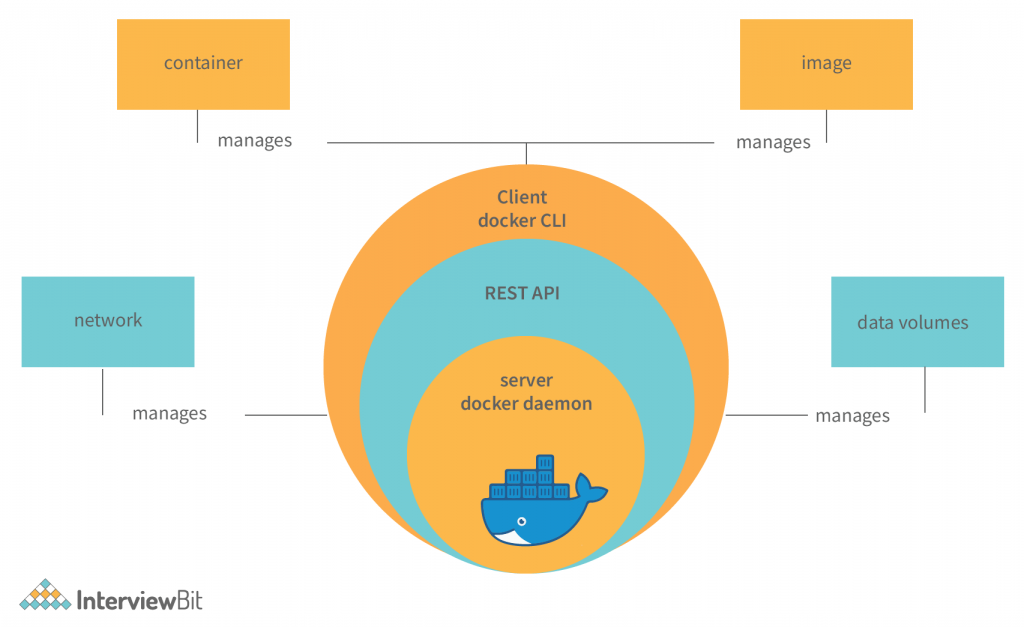

To understand Docker’s workflow, let us first explore the components of the Docker Engine and how they work together to develop, assemble, ship, and run applications. The stack comprises:

- Docker daemon: A persistent background process that manages containers, images, networks, and volumes. It handles API requests from the client, builds and runs Docker images, and creates containers from those images.

- Docker Engine REST API: The API allows other applications to control the Docker Engine. They can use it to query information about containers or images, manage or upload images, or perform actions such as creating new containers. The API is served over HTTP, so any HTTP client can access it.

- Docker CLI: A command-line tool for interacting with the Docker daemon. Its convenience is one of the key reasons people like to use Docker in development environments.

The Docker client talks to the Docker daemon, which does the heavy lifting in the background. The client and daemon can run on the same system, but you can also connect a Docker client to a remote Docker daemon through the REST API, which works over Unix sockets or a network interface.
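
To make the client/daemon conversation concrete, here is a minimal sketch of an HTTP client that speaks to the daemon over a Unix socket using only the Python standard library. It assumes the daemon listens on the default Linux socket path `/var/run/docker.sock`; the `UnixHTTPConnection` class and the `api_path` and `ping` helpers are names made up for this example. `ping` needs a running daemon, so the sketch only defines it and prints a path.

```python
import http.client
import socket


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTPConnection that connects to a Unix socket instead of TCP."""

    def __init__(self, socket_path: str) -> None:
        # "localhost" is only used to fill in the HTTP Host header.
        super().__init__("localhost")
        self.socket_path = socket_path

    def connect(self) -> None:
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.connect(self.socket_path)
        self.sock = sock


def api_path(resource: str, version: str = "") -> str:
    """Build an Engine API path, optionally pinned to an API version."""
    prefix = f"/v{version}" if version else ""
    return f"{prefix}/{resource.lstrip('/')}"


def ping(socket_path: str = "/var/run/docker.sock") -> str:
    """Send GET /_ping to the daemon; requires a running Docker daemon."""
    conn = UnixHTTPConnection(socket_path)
    try:
        conn.request("GET", api_path("_ping"))
        return conn.getresponse().read().decode()
    finally:
        conn.close()


print(api_path("containers/json", version="1.41"))
```

The Docker CLI does essentially the same thing on your behalf: each command becomes one or more HTTP requests to the daemon.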

Docker Architecture

The architecture of Docker consists of Client, Registry, Host, and Storage components. The roles and functions of each are explained below.

1. Docker’s Client

The Docker client is how users interact with Docker. It can communicate with more than one daemon, and the daemon it targets can stay the same or change over time. The client provides a command-line interface (CLI) for sending commands to the daemon. The three main commands are docker build, docker pull, and docker run.
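
As a rough sketch, the three commands can be assembled as argument lists in Python and handed to `subprocess.run` when you actually want to execute them. The image name `myapp:1.0` and the helper function names are hypothetical; the block only builds and prints the commands, so it runs without Docker installed.

```python
import shlex


def docker_build(tag: str, context: str = ".") -> list[str]:
    """Command that builds an image from the Dockerfile in `context`."""
    return ["docker", "build", "-t", tag, context]


def docker_pull(image: str) -> list[str]:
    """Command that downloads an image from a registry."""
    return ["docker", "pull", image]


def docker_run(image: str, *args: str, detach: bool = False) -> list[str]:
    """Command that starts a container from an image."""
    cmd = ["docker", "run"]
    if detach:
        cmd.append("-d")  # run the container in the background
    return cmd + [image, *args]


for cmd in (
    docker_build("myapp:1.0"),
    docker_pull("nginx:latest"),
    docker_run("myapp:1.0", detach=True),
):
    # To execute for real: subprocess.run(cmd, check=True)
    print(shlex.join(cmd))
```

Keeping commands as lists rather than strings avoids shell-quoting problems when they are later passed to `subprocess.run`.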

2. Docker Host

A Docker host provides the environment in which container-based applications execute. It manages images, containers, networks, and storage volumes. The Docker daemon is the crucial component: it performs the essential container-running functions and receives commands from the Docker client, and it can also communicate with other daemons to get its work done.

3. Docker Objects

1. Images

Images are read-only templates from which containers are created. They also contain metadata that describes the container’s capabilities, its dependencies, and the other components it needs to function. Images are used to store and ship applications. A base image can be used on its own, but it can also be customized, for example to add new elements or extend its capabilities.

You can share a private image with colleagues within your company using a private registry, or share an image with the world using a public registry like Docker Hub. This is a substantial benefit for businesses because it greatly simplifies collaboration within and between organizations, something that used to be much harder.

2. Containers

Containers are like mini environments in which you run applications. The great thing about containers is that they hold everything an application needs to do its job in an isolated environment. A container can access only the resources it has been given: by default that means the contents of its image, unless additional access, such as networks or storage, is granted when the container is created.

A container is defined by its image and by any additional configuration options provided when it starts, including but not limited to network connections and storage options. You can also create a new image based on the current state of a container. Because containers are much lighter than virtual machines, they spin up within seconds and give you much better server density.
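
The relationship between an image, a running container, and a new image created from the container’s state can be modelled in a few lines. This is a simplified sketch: the `Image` and `Container` classes are hypothetical, and layers are reduced to dictionaries mapping file paths to contents.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Image:
    """Read-only stack of layers; each layer maps file paths to contents."""
    layers: tuple[dict[str, str], ...]


@dataclass
class Container:
    """An image plus a private writable layer on top of it."""
    image: Image
    writable: dict[str, str] = field(default_factory=dict)

    def write(self, path: str, content: str) -> None:
        # Writes never touch the image; they land in the writable layer.
        self.writable[path] = content

    def read(self, path: str) -> str:
        # Check the writable layer first, then image layers from the top down.
        if path in self.writable:
            return self.writable[path]
        for layer in reversed(self.image.layers):
            if path in layer:
                return layer[path]
        raise FileNotFoundError(path)

    def commit(self) -> Image:
        # Freeze the current writable layer as a new top layer of a new image.
        return Image(self.image.layers + (dict(self.writable),))


base = Image(layers=({"/app/main.py": "v1"},))
container = Container(base)
container.write("/app/config.ini", "debug=true")
snapshot = container.commit()

fresh = Container(snapshot)
print(fresh.read("/app/config.ini"))
```

The base image is left untouched by the write, which is what allows many containers to share one image safely.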

3. Networks

Docker networking is the channel through which isolated containers communicate. There are five main network drivers in Docker:

- Bridge: The default network driver for containers. Use it when your application runs in standalone containers, i.e., multiple containers on one host that communicate with each other over a shared bridge network while staying isolated from containers on other networks.

- Host: This driver removes the network isolation between the container and the Docker host. The container uses the host’s network stack directly, so it relies on the native network capabilities of your machine.

- Overlay: A software-defined networking technology that allows containers running on different Docker hosts to communicate with each other. To connect containers across hosts, you create the overlay network and configure it so that access is granted from one side to the other.

- None: This driver disables networking for the container.

- Macvlan: This driver assigns a MAC address to each container so that it appears as a physical device on your network. Traffic is then routed to containers by their MAC addresses. Use this network when you want containers to look like physical devices, for example during migration of a VM setup.
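
The drivers listed above map onto the CLI roughly as follows. This sketch only builds the command lines; the function names and the network name `app-net` are made up for illustration. Note that in practice an overlay network requires swarm mode, and a macvlan network usually needs extra options such as a subnet and a parent interface, which are left out here.

```python
# Drivers for which you normally create your own named network.
CREATABLE_DRIVERS = {"bridge", "overlay", "macvlan"}


def network_create(name: str, driver: str = "bridge") -> list[str]:
    """Command that creates a user-defined network with the given driver."""
    if driver not in CREATABLE_DRIVERS:
        raise ValueError(f"use the predefined '{driver}' network instead")
    return ["docker", "network", "create", "--driver", driver, name]


def run_on_network(image: str, network: str) -> list[str]:
    """Command that starts a container attached to a network.

    `network` may be a user-defined network name or one of the
    predefined networks "bridge", "host", or "none".
    """
    return ["docker", "run", "--network", network, image]


print(" ".join(network_create("app-net")))
print(" ".join(run_on_network("nginx", "app-net")))
print(" ".join(run_on_network("nginx", "none")))
```

The "host" and "none" cases are handled by attaching to the predefined network of that name rather than by creating a new one.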

4. Storage

When it comes to storing data, you have several options. By default, data is stored inside the writable layer of a container, which works through storage drivers. The disadvantage is that this data is tied to the container: once the container is removed, the data is lost unless it has been committed or saved somewhere else. For persistent storage, Docker offers four options.

- Data volumes: They provide persistent storage, with the ability to name volumes, list volumes, and list the container associated with a volume. Data volumes live outside the container’s copy-on-write mechanism, in a Docker-managed area of the host filesystem (or on external storage when a volume driver is used), and this is what makes them so efficient.

- Volume Container: An alternative approach in which a dedicated container hosts a volume, and that volume is mounted into other containers. Because the volume container is independent of the application container, you can share it across multiple containers.

- Directory Mounts: A third option is to mount a host’s local directory into the container. In the previous cases, the volumes would have to be within the Docker volumes folder, whereas for directory mounts any directory on the Host machine can be used as a source for the volume.

- Storage Plugins: Storage plugins provide Docker with the ability to connect to external storage sources. These plugins allow Docker to work with a storage array or appliance, mapping the host’s drive to an external source. One example of this is a plugin that allows you to use GlusterFS storage from your Docker install and map it to a location that can be accessed easily.
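
The difference between a data volume and a directory mount shows up clearly in the `--mount` flag of docker run. The sketch below builds both variants; the helper names, the volume name `app-data`, and the paths are hypothetical, and a Linux host is assumed for the absolute-path check.

```python
def volume_mount(volume: str, target: str) -> list[str]:
    """Mount a named, Docker-managed volume at `target` in the container."""
    return ["--mount", f"type=volume,source={volume},target={target}"]


def bind_mount(host_dir: str, target: str) -> list[str]:
    """Mount an arbitrary host directory at `target` in the container."""
    if not host_dir.startswith("/"):
        # Bind mounts refer to the host filesystem by absolute path.
        raise ValueError("bind mounts need an absolute host path")
    return ["--mount", f"type=bind,source={host_dir},target={target}"]


def run_with_mounts(image: str, *mounts: list[str]) -> list[str]:
    """Assemble a docker run command with any number of mounts."""
    cmd = ["docker", "run"]
    for mount in mounts:
        cmd += mount
    return cmd + [image]


cmd = run_with_mounts(
    "myapp:1.0",
    volume_mount("app-data", "/var/lib/data"),
    bind_mount("/home/dev/project", "/src"),
)
print(" ".join(cmd))
```

With a volume, Docker decides where the data lives on the host; with a bind mount, you do.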

4. Docker’s Registry

Docker registries are services that allow you to store and retrieve images as required. A registry is made up of Docker repositories, which keep all the versions of an image under one roof. The best-known public registry is Docker Hub; the Docker Cloud service that used to be listed alongside it has since been discontinued. Private registries are also fairly common among organizations. The most commonly used commands when working with registries are docker push, docker pull, and docker run.
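
Which registry a docker pull or docker push talks to is determined by the image name itself. The following is a simplified sketch of how an image reference breaks down into registry, repository, and tag. It deliberately ignores digests (`@sha256:...`), and the function name and the `registry.example.com` host are made up for illustration.

```python
def parse_image_ref(ref: str) -> tuple[str, str, str]:
    """Split an image reference into (registry, repository, tag)."""
    registry = "docker.io"  # Docker Hub is the default registry
    remainder = ref
    first, sep, rest = ref.partition("/")
    # The first path component is a registry host if it looks like one.
    if sep and ("." in first or ":" in first or first == "localhost"):
        registry, remainder = first, rest
    # The tag is whatever follows the last colon; it defaults to "latest".
    repo, colon, tag = remainder.rpartition(":")
    if not colon:
        repo, tag = remainder, "latest"
    # Official Docker Hub images live under the "library" namespace.
    if registry == "docker.io" and "/" not in repo:
        repo = f"library/{repo}"
    return registry, repo, tag


for ref in ("nginx", "nginx:1.25", "registry.example.com:5000/team/app:2.0"):
    print(ref, "->", parse_image_ref(ref))
```

This is why pushing to a private registry starts with tagging the image under a name that includes the registry’s host.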

Docker Use Cases

- Enabling Continuous Delivery (CD): Docker makes continuous delivery easier. Docker images can be tagged, so each change can produce its own uniquely identifiable image, which simplifies implementing continuous delivery. For the rollout itself you have two common options: blue/green deployment (keeping the old system running while the new one is brought up) or Phoenix deployment (rebuilding the system from scratch on every release).

- Reducing Debugging Overhead: Docker addresses the problem of keeping development, staging, and production environments identical, without developers having to maintain complex configurations for each one. This increases the productivity of just about any engineer. It also makes debugging easier: because every environment runs the same stack, you can trace a problem back down that stack instead of troubleshooting with several different tools that may not produce the same result.

- Enabling Full-Stack Productivity When Offline: You can bundle your application into containers and deploy it locally. This saves time and resources, since the application is portable and keeps working offline when it runs on your local host.

- Modelling Networks: You can spin up hundreds, even thousands, of containers on one host in a few minutes. This lets you model almost any scenario and replicate it as many times as you need on different hosts, with little additional cost per container. The approach makes it possible to test real-world use cases and change the environment to suit the current purpose (for example, predictive analysis).

- Microservices Architecture: To keep pace with changing architecture requirements and the challenges of building large-scale applications, a shift from monolithic to modular design is necessary. Many companies have changed how they design their applications by adopting SOA (service-oriented architecture) or a microservice architecture. In a microservice architecture, each service is highly autonomous and can be scaled and deployed independently without interrupting the other running services. With Docker containers, these complex distributed systems can be built and deployed faster than with traditional methods.

- Prototyping Software: With Docker Compose, you can spin up and deploy a set of containers with a single command. This means you can test new features very quickly without worrying about affecting the whole application.

- Packaging Software: Using Docker, an application can be packaged and shipped in a fast, easy, and reliable way. As Docker is lightweight, the package can be deployed on any machine of your choice, irrespective of the flavour of Linux installed on it. For example, a Java application can be shipped in an image that already includes its Java runtime, so the host does not need a JVM installed.
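
The blue/green option mentioned under continuous delivery can be sketched as a small state machine: the new image tag is brought up in the idle environment, and traffic switches only if it proves healthy. The `BlueGreenRouter` class, the `healthy` flag, and the `shop` image tags are all hypothetical; a real setup would run health checks against live containers and reconfigure a load balancer.

```python
from dataclasses import dataclass


@dataclass
class BlueGreenRouter:
    """Hypothetical router that sends traffic to one of two environments."""
    environments: dict[str, str]  # environment name -> image tag it runs
    live: str = "blue"

    @property
    def idle(self) -> str:
        return "green" if self.live == "blue" else "blue"

    def deploy(self, image_tag: str, healthy: bool) -> str:
        # Bring the new version up in the idle environment first.
        target = self.idle
        self.environments[target] = image_tag
        # Switch traffic only if the new version passed its health check;
        # otherwise the old version simply stays live.
        if healthy:
            self.live = target
        return self.environments[self.live]


router = BlueGreenRouter({"blue": "shop:1.0", "green": "shop:1.0"})
print(router.deploy("shop:1.1", healthy=True))   # traffic moves to green
print(router.deploy("shop:1.2", healthy=False))  # traffic stays on green
```

Because every release is a distinct image tag, rolling back is just a matter of pointing traffic at the environment that still runs the previous tag.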

Features of Docker

- Faster and Easier Configuration: Docker gives you a ready-made environment in which to deploy and test applications, in a drastically reduced amount of time and with fewer resources than if the entire infrastructure (a database server, for example) had to be installed on a computer. It suits almost any organization that wants to isolate applications from its physical infrastructure, do more with less hardware and software, spare its employees from managing virtual servers, or deploy applications anywhere without additional configuration.

- Application isolation: Docker provides containers that let developers build applications in an isolated environment. This independence allows any application to be created and run in a container, regardless of its programming language or the configuration it requires.

- Increase in productivity: Containers are portable and self-contained, and each includes an isolated disk volume, so you can move an application between environments without losing track of what is inside it as the container is developed over time.

- Swarm: Swarm is a clustering and scheduling tool for Docker containers. At the front end it uses the Docker API, which means it can be controlled with the same tools that work with Docker. It groups many Docker engines into a self-organizing cluster, and it supports pluggable backends, so you can use a variety of them depending on your requirements.

- Services: A service is an abstraction that describes the desired state of a workload in a swarm, making it easier to work with the orchestration layer through the Swarm API. Each service specifies the container image to run and how many instances (replicas) should be running, and Swarm schedules those instances across the nodes.

- Security Management: Docker can store sensitive information, known as secrets, in the swarm and lets you choose which services are given access to which secrets, with commands for creating and inspecting them.

- Rapid scaling of Systems: Containers package an application with all of its dependencies into a single software bundle. This benefits service providers by allowing them to combine multiple services on a single computing platform. It works because containers do not rely on how their host is configured; they rely only on their own contents and so run correctly on any host with a compatible kernel. If you want to split half of a server’s capacity between two applications and reserve the other half for a third, a container will not mind where it runs as long as the resources it needs are available. You just need to ensure that there is enough RAM.

- Better Software Delivery: Containers make software delivery safer and more dependable. They are portable and self-contained, and each includes an isolated disk volume, so the same package travels unchanged as it is developed over time and deployed to various environments.

- Software-defined networking: Docker’s support for software-defined networking (SDN) makes even tough jobs such as networking much easier. With the CLI (command-line interface) and Engine, you can define isolated networks for containers. Designers and engineers can also shape intricate network topologies and define them in configuration files. Since an application’s containers can run on an isolated virtual network with controlled access points, this also supports stronger security.

- Has the Ability to Reduce the Size: Because containers share the host’s kernel rather than each carrying a full operating system, they have a comparatively small footprint. Fewer components need to be installed on the host itself, so the host OS can be kept comparatively small as well.
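
To illustrate how a service’s replicas end up spread across the nodes of a swarm, here is a deliberately simplified round-robin placement. Swarm’s real scheduler also weighs resources, constraints, and node health, so treat this purely as a sketch; the function name and the node names are made up.

```python
def spread_replicas(service: str, replicas: int, nodes: list[str]) -> dict[str, list[str]]:
    """Assign each replica of a service to a node in round-robin order."""
    if not nodes:
        raise ValueError("at least one node is required")
    placement: dict[str, list[str]] = {node: [] for node in nodes}
    for i in range(replicas):
        node = nodes[i % len(nodes)]
        # Replicas are numbered from 1, in the style of "web.1", "web.2", ...
        placement[node].append(f"{service}.{i + 1}")
    return placement


placement = spread_replicas("web", 5, ["node-a", "node-b", "node-c"])
for node, tasks in placement.items():
    print(node, tasks)
```

With five replicas and three nodes, no node ends up with more than two instances, so losing one node takes out at most part of the service.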

Benefits of Using Docker

It can be hard to keep up with all the new technologies that seem to emerge weekly. But when a product like Docker is added to your toolset, one thing is almost guaranteed: it changes the way you build and deploy software. Let us cover the top advantages of Docker to understand it better.

- Return on Investment and Cost Savings: The biggest driver of most management decisions when selecting a new product is cost. Large, established companies need to generate steady revenue over the long term, and software that reduces costs and raises profits will always be a priority. Docker makes it easier and cheaper to run applications. Each application can use fewer resources than before, so organizations become more efficient: they need fewer servers and fewer people to maintain them, and each server does more work. This helps engineering teams stay small and effective at delivering value for end users.

- Standardization and Productivity: Containers help to keep your development and release cycles consistent. You can standardize a specific environment for yourself or for your entire team. Engineers are then more likely to catch and fix bugs in the application, and in less time, leaving more time for adding new features. Docker containers also make it easy to roll back a change. For example, if you upgrade one part of your app and something breaks elsewhere in your infrastructure, you can roll back to the previous version of the container. This is much faster than working through a full process to patch the problem.

- CI Efficiency: By using Docker, you can build a container image once and then use it across every step of the deployment process, which will enable you to separate non-dependent steps and run them in parallel. This speeds up the time from build to production significantly.

- Compatibility and Maintainability: By using a containerization platform, Docker allows you to build more reliable software by ensuring that your project’s stack environment runs in a repeatable, predictable, and identical fashion across the network. This helps avoid unforeseen issues and ensures your project is easier to maintain, allowing your IT infrastructure to be more stable and reliable, especially with consistency across testing and development environments.

- Simplicity and Faster Configurations: Docker simplifies matters in your development cycle. In the past, certain configuration details would have to be set on the client’s computer, and mirroring those settings back to the server is tricky. Docker doesn’t have that problem because it works no matter where or how you use it. Consistency across environments is realized by ensuring there are no ties between code and environment.

- Rapid Deployment: Docker containers reduce deployment time from hours to minutes. This is possible because a container does not boot a full operating system; it runs as an isolated process on the host, with only a lightweight user-space environment of its own. In addition, each image acts as a kind of snapshot, so an earlier state remains available if someone deletes a necessary folder by mistake.

- Continuous Deployment and Testing: Docker keeps environments consistent from development through to production. This allows you to test in one location and then deploy in another without discrepancies, and it works alongside automation tooling regardless of the platform. Another big advantage of bringing Docker into your development process is its flexibility. If you need to upgrade existing containers and deploy them on new servers during a project’s release cycle, Docker makes the change with little fuss. You can easily change your containers and make sure they integrate seamlessly.

- Multi-Cloud Platforms: One of Docker’s greatest benefits is that it can be used on so many different platforms. Over the last few years, major cloud computing providers including Amazon Web Services (AWS) and Google Cloud Platform (GCP) have embraced Docker and added their own support for it. This means that companies or individuals can “containerize” their apps and take them to any platform with ease. A container can move from an Amazon EC2 instance to a VirtualBox environment with the same consistency and functionality. Containers also work well with other providers like Microsoft Azure, as well as OpenStack, and can be managed by configuration managers like Chef, Puppet, Ansible, etc.

- Isolation: Docker runs applications in containers, which are isolated from one another. You can have as many or as few containers as you like for different applications, and each is kept separate and independent of the others. If your production app runs in one container, it will not affect your development environment, however closely the two are scripted together. You can therefore apply fixes to one cleanly without affecting the other. This means less chance of error on production machines, while you keep a controlled development environment in which to test something quickly and patch it promptly. Docker can also ensure that each application uses only the resources allotted to it, so a single web app cannot consume all of your available resources and leave other applications without what they need to perform at an optimal level.

- Security: It is always a good idea to understand security best practices for the platforms your code runs on. One way of ensuring the integrity of your data is to implement security policies, especially if your application processes data that has not otherwise been protected. If your code is deployed in Docker containers, the containers themselves should also be secured, so that the end-user experience stays as seamless and trouble-free as possible.
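
The CI efficiency point, building an image once and running independent steps against it in parallel, can be sketched with a thread pool. The `run_step` function is a placeholder that only returns a string; a real pipeline would start a container from the image for each step. The step names and the `myapp:abc123` tag are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def run_step(step: str, image: str) -> str:
    """Placeholder for running one CI step inside a container of `image`."""
    # A real pipeline would start a container here, e.g. via subprocess.
    return f"{step} passed on {image}"


def run_pipeline(image: str, steps: list[str]) -> list[str]:
    """Run independent steps concurrently against the same image."""
    with ThreadPoolExecutor(max_workers=max(1, len(steps))) as pool:
        # map() returns results in the order of `steps`, not completion order.
        return list(pool.map(lambda step: run_step(step, image), steps))


results = run_pipeline("myapp:abc123", ["lint", "unit-tests", "security-scan"])
for line in results:
    print(line)
```

Because every step uses the same immutable image, the steps cannot interfere with each other, which is what makes running them in parallel safe.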

Conclusion:

Running applications in containers instead of virtual machines is gaining momentum thanks to the popularity and value of Docker. Container technology is considered one of the fastest-growing in the recent history of the software industry. At its heart lies Docker, a platform that allows users to easily package, distribute, and manage applications within containers. In other words, it is an open-source project that automates the deployment of applications inside software containers through simple command-line tools.

Takeaway

Docker is a platform that allows users to easily package, distribute, and manage applications within containers. It is an open-source project that automates the deployment of applications inside containers.