Kubernetes is an open-source platform for deploying, scaling, and operating containerized applications. It is designed to be easy to use and to give developers the power to control and scale their applications. Kubernetes was originally designed at Google and is now maintained by the Cloud Native Computing Foundation (CNCF) together with a large open-source community.

What is Kubernetes Architecture?

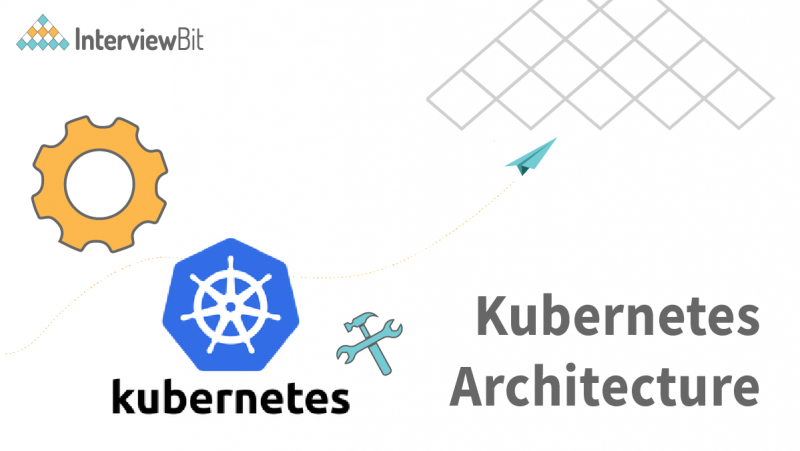

Kubernetes has a loosely coupled architecture with a built-in mechanism for service discovery within a cluster. A Kubernetes cluster consists of a control plane, which can be replicated across several machines for high availability, and one or more compute (worker) nodes. The control plane oversees the overall cluster, exposes the Kubernetes API, and schedules workloads onto the compute nodes. Each compute node runs a container runtime, such as containerd or CRI-O, and an agent called the kubelet, which communicates with the control plane. Nodes can be bare-metal servers or virtual machines (VMs), either on premises or in the cloud, and any of these can be used for development or production. Wherever a node runs, the control plane manages it through its kubelet.

The following key components comprise environments running Kubernetes:

- The control plane manages the lifecycle of applications running on a Kubernetes cluster. Through the Kubernetes API it exposes, tools such as kubectl can update and monitor the cluster and troubleshoot the health of the cluster and its applications. The same API serves as the administrative interface for reading detailed information about the cluster and its objects.

- Nodes form the Kubernetes data plane. On each node, the kubelet registers the node with the control plane, watches for the pods assigned to that node, tells the container runtime to start their containers, and reports node and pod status back to the API server. The kubelet does not recreate deleted pods: if a pod managed by a controller, such as a Deployment, is deleted or its node fails, that controller creates a replacement pod, which the scheduler then places on a suitable node. It is important to understand how the data plane works so that you can properly architect your infrastructure and operate it efficiently.

- A pod is a group of one or more containers that are always scheduled together on the same node and share a network namespace (a single IP address and port space) as well as any storage volumes defined for the pod. For example, suppose you run a Shopify-style online shopping platform on which each customer gets an individual storefront application. In this scenario, you could run each customer’s storefront in its own pod. Kubernetes schedules pods onto worker nodes, and controllers such as Deployments can run several replicas of a pod on different nodes so that no single node is a point of failure. Resource requests and limits control how much CPU and memory each container in a pod can use.

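- A PersistentVolume (PV) is a Kubernetes API object that represents a piece of storage in the cluster, provisioned either by an administrator or dynamically through a StorageClass. Pods request storage through PersistentVolumeClaims (PVCs), and because a PV’s lifecycle is independent of any individual pod, its data survives when pods are restarted or rescheduled. Volume plugins and Container Storage Interface (CSI) drivers let PVs be backed by many kinds of storage, from local disks to cloud block storage, on any Kubernetes platform or cloud environment. With the right storage choices, you can build efficient, high-performing, and easy-to-scale stateful systems.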
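To make the pod and persistent volume descriptions above concrete, here is a minimal sketch that builds a Pod manifest as a Python dictionary and prints it as JSON, a format `kubectl apply -f` accepts alongside YAML. The pod name `storefront`, the claim name `storefront-data`, the image, and the resource figures are hypothetical placeholders, and the sketch assumes the PersistentVolumeClaim already exists in the same namespace.

```python
import json


def storefront_pod(name: str, claim_name: str) -> dict:
    """Build a Pod manifest as a plain dict.

    The single container requests CPU and memory and mounts a volume
    backed by an existing PersistentVolumeClaim, so the data outlives the pod.
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "labels": {"app": name}},
        "spec": {
            "containers": [
                {
                    "name": "web",
                    "image": "nginx:1.25",  # hypothetical image choice
                    "ports": [{"containerPort": 80}],
                    # Requests guide scheduling; limits cap usage at runtime.
                    "resources": {
                        "requests": {"cpu": "250m", "memory": "128Mi"},
                        "limits": {"cpu": "500m", "memory": "256Mi"},
                    },
                    "volumeMounts": [
                        {"name": "data", "mountPath": "/usr/share/nginx/html"}
                    ],
                }
            ],
            # The claim must already exist in the pod's namespace.
            "volumes": [
                {"name": "data", "persistentVolumeClaim": {"claimName": claim_name}}
            ],
        },
    }


if __name__ == "__main__":
    print(json.dumps(storefront_pod("storefront", "storefront-data"), indent=2))
```

Saving the output as `pod.json` and running `kubectl apply -f pod.json` would ask the API server to create the pod; the scheduler would then use the CPU and memory requests when picking a node.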

Kubernetes Control Plane

The control plane makes global decisions about the cluster, such as scheduling, and detects and responds to cluster events. It includes the following components:

- The Kubernetes API server (kube-apiserver) is the front end of the control plane. It exposes the Kubernetes REST API, validates and processes requests, and serves information about the cluster and its resources. Every other component and client, including kubectl, reads and changes cluster state through the API server, and because conformant clusters expose the same API, you can deploy and manage applications from the same codebase and manifests across different clusters and environments. Clients can also watch the API to be notified of changes in real time; this is how controllers detect failed or unhealthy pods and replace them.

- etcd is a consistent, highly available key-value store that holds all cluster data, including the desired and current state of every object. Only the API server talks to etcd directly; when you query cluster state, you query the API server, which reads from etcd. In production, etcd usually runs as a cluster of three or five members that replicate data with the Raft consensus algorithm, so it stays available and consistent as long as a majority (quorum) of members is healthy. Worker nodes do not store copies of etcd data. This replication is what lets the cluster survive the loss of an etcd member without losing its view of cluster state.

- The scheduler (kube-scheduler) watches for newly created pods that have no node assigned and selects a node for each one to run on. It first filters out nodes that cannot run the pod, for example because they lack the CPU and memory the pod requests, do not satisfy its affinity rules, or carry taints that the pod does not tolerate, and then scores the remaining nodes to pick the best fit. It is important to note that the scheduler does not create pods or start containers. It only assigns (binds) each pending pod to a node, and the kubelet on that node then runs it.

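- The controller manager (kube-controller-manager) runs the cluster’s built-in controllers. Each controller is logically a separate process, but to reduce complexity they are all compiled into a single binary and run in a single process.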

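- The replication controller ensures that the specified number of pod replicas is running at all times, creating or deleting pods as needed. In current Kubernetes, this role is usually filled by ReplicaSets, which Deployments manage for you.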

- The node controller notices and responds when nodes go down. It monitors node heartbeats, marks a node’s Ready condition as Unknown when the node stops reporting, and, after a grace period, triggers eviction of the pods on an unreachable node so that their controllers can recreate them on healthy nodes.

- The endpoints controller populates Endpoints objects, which join Services and pods: for each Service, it records the IP addresses and ports of the pods that match the Service’s label selector, along with whether each one is ready. Newer clusters also use the more scalable EndpointSlice API for the same purpose.

- The service account and token controllers create a default ServiceAccount for every new namespace and manage API access tokens. A ServiceAccount gives the processes running in a pod an identity for authenticating to the API server. Pods use their namespace’s default ServiceAccount unless you specify another one, and in current Kubernetes versions the tokens mounted into pods are time-limited and rotated automatically. Give each workload its own ServiceAccount with only the permissions it needs, and test it with a limited set of requests. For example, if you are testing a billing application, you can give it a dedicated ServiceAccount and confirm that it can reach only the resources the billing application actually uses.

- The cloud controller manager (cloud-controller-manager) embeds cloud-specific control logic. It links the cluster to the cloud provider’s API and separates the components that interact with the cloud platform from those that only interact with the cluster. It runs only the controllers that are specific to your cloud provider, so a cluster running on your own premises usually has no cloud controller manager. Its controllers include the node, route, and service controllers.

- The node controller in the cloud controller manager checks the cloud provider’s API after a node stops responding to determine whether the node’s virtual machine has been deleted in the cloud. If it has, the controller deletes the corresponding Node object from the cluster.

- The route controller sets up routes in the underlying cloud network so that containers on different nodes in the cluster can communicate with each other.

- The service controller creates, updates, and deletes the cloud provider’s load balancers for Services of type LoadBalancer.
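Every client reaches cluster state through the API server’s REST paths. The sketch below builds those paths following the URL conventions the Kubernetes API uses: resources in the core group (such as pods and services) are served under `/api/v1`, resources in named groups (such as `apps` for Deployments) under `/apis/<group>/<version>`, and namespaced resources add a `namespaces/<namespace>` segment. The helper `resource_path` is hypothetical, written only for illustration.

```python
from typing import Optional
from urllib.parse import urlencode


def resource_path(group: str, version: str, resource: str,
                  namespace: Optional[str] = None,
                  name: Optional[str] = None,
                  watch: bool = False) -> str:
    """Return the API server path for a Kubernetes resource (hypothetical helper).

    The core group is passed as group="" and lives under /api/<version>;
    every named group lives under /apis/<group>/<version>.
    """
    if watch and name is not None:
        # Watches are made on collections; narrow them with a fieldSelector instead.
        raise ValueError("watch a collection, not a single named object")
    prefix = f"/api/{version}" if group == "" else f"/apis/{group}/{version}"
    parts = [prefix]
    if namespace is not None:
        parts.append(f"namespaces/{namespace}")
    parts.append(resource)
    if name is not None:
        parts.append(name)
    path = "/".join(parts)
    if watch:
        # Ask the API server to stream change events instead of returning a list once.
        path += "?" + urlencode({"watch": "true"})
    return path


if __name__ == "__main__":
    print(resource_path("", "v1", "pods", namespace="default"))
    print(resource_path("apps", "v1", "deployments", namespace="shop", name="storefront"))
```

With `kubectl proxy` running (it listens on `127.0.0.1:8001` by default), `curl http://127.0.0.1:8001/api/v1/namespaces/default/pods` would list the pods in the `default` namespace, and appending `?watch=true` would stream changes instead.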
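The fault tolerance of an etcd cluster follows from its majority-based (Raft) replication: with n voting members, a quorum is floor(n/2) + 1, and the cluster keeps accepting writes while at least that many members are healthy. A short sketch of the arithmetic, with hypothetical function names:

```python
def etcd_quorum(members: int) -> int:
    """Smallest majority of an etcd cluster with `members` voting members."""
    if members < 1:
        raise ValueError("an etcd cluster needs at least one member")
    return members // 2 + 1


def tolerated_failures(members: int) -> int:
    """Members that can fail while a quorum remains available."""
    return members - etcd_quorum(members)


if __name__ == "__main__":
    for n in range(1, 8):
        print(f"{n} members: quorum {etcd_quorum(n)}, tolerates {tolerated_failures(n)} failure(s)")
```

The numbers show why etcd clusters usually have an odd size: four members tolerate one failure, exactly as three do, while needing one more healthy member to keep quorum.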
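The scheduler’s job of choosing a node for each pending pod happens in two phases: it filters out nodes that cannot run the pod, then scores the remaining ones. The sketch below illustrates that idea with a deliberately simplified model. It is not the real kube-scheduler, which runs a framework of plugins with many more checks (affinity, taints, topology spread, and so on); it considers only CPU (in millicores) and memory (in MiB) and scores nodes by how much capacity would remain free, loosely similar to a least-allocated strategy. The node names and figures are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    cpu_total_m: int    # allocatable CPU in millicores
    mem_total_mi: int   # allocatable memory in MiB
    cpu_free_m: int     # CPU not yet requested by other pods
    mem_free_mi: int    # memory not yet requested by other pods


@dataclass
class PodRequest:
    cpu_m: int
    mem_mi: int


def feasible(node: Node, pod: PodRequest) -> bool:
    """Filter step: keep only nodes with room for the pod's requests."""
    return node.cpu_free_m >= pod.cpu_m and node.mem_free_mi >= pod.mem_mi


def score(node: Node, pod: PodRequest) -> float:
    """Score step: average fraction of CPU and memory left free after placement."""
    cpu_left = (node.cpu_free_m - pod.cpu_m) / node.cpu_total_m
    mem_left = (node.mem_free_mi - pod.mem_mi) / node.mem_total_mi
    return (cpu_left + mem_left) / 2


def schedule(pod: PodRequest, nodes: List[Node]) -> Optional[str]:
    """Return the chosen node's name, or None if no node fits (pod stays Pending)."""
    candidates = [n for n in nodes if feasible(n, pod)]
    if not candidates:
        return None
    return max(candidates, key=lambda n: score(n, pod)).name


if __name__ == "__main__":
    nodes = [
        Node("node-a", 2000, 4096, cpu_free_m=500, mem_free_mi=1024),
        Node("node-b", 2000, 4096, cpu_free_m=1500, mem_free_mi=3072),
        Node("node-c", 2000, 8192, cpu_free_m=100, mem_free_mi=8192),
    ]
    print(schedule(PodRequest(cpu_m=250, mem_mi=512), nodes))
    print(schedule(PodRequest(cpu_m=4000, mem_mi=512), nodes))
```

With these hypothetical nodes, `node-c` is filtered out because it lacks CPU, and `node-b` wins the scoring because more of its capacity remains free after placement. A pod that fits nowhere gets `None`, which corresponds to a pod staying in the Pending phase.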
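The controllers in the controller manager share one pattern, the control loop: observe the actual state, compare it with the desired state, and act to close the gap. The sketch below applies that pattern to replica counts in the spirit of the replication controller. It works on plain lists of hypothetical pod names instead of calling the API server, and it picks surplus pods to delete by name order, whereas real controllers rank pods (for example, preferring to delete pods that are not yet ready).

```python
from typing import List, Tuple


def reconcile(desired: int, running: List[str],
              prefix: str = "web") -> Tuple[List[str], List[str]]:
    """One pass of a replication control loop.

    Compares the desired replica count with the pods that exist and
    returns (pods_to_create, pods_to_delete) that would close the gap.
    """
    if desired < 0:
        raise ValueError("desired replica count cannot be negative")
    existing = set(running)
    to_create: List[str] = []
    to_delete: List[str] = []
    if len(running) < desired:
        i = 0
        # Generate hypothetical names that do not clash with existing pods.
        while len(running) + len(to_create) < desired:
            candidate = f"{prefix}-{i}"
            if candidate not in existing:
                to_create.append(candidate)
            i += 1
    elif len(running) > desired:
        # Simplification: drop surplus pods in name order.
        to_delete = sorted(running)[desired:]
    return to_create, to_delete


def run_until_converged(desired: int, running: List[str]) -> List[str]:
    """Repeat observe, compare, act until no further changes are needed."""
    pods = list(running)
    while True:
        to_create, to_delete = reconcile(desired, pods)
        if not to_create and not to_delete:
            return sorted(pods)
        pods = [p for p in pods if p not in to_delete] + to_create


if __name__ == "__main__":
    print(reconcile(3, ["web-1"]))
    print(run_until_converged(2, ["web-0", "web-3", "web-7"]))
```

Because each pass only looks at current state, the same loop recovers from any disturbance, such as a deleted pod, without needing to know what caused it.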

Kubernetes Cluster Architecture

Kubernetes works declaratively. When containers are deployed or updated with new software, you submit the new desired state to the API server, which records it in etcd. The kubelet on each node watches the API server for the pods assigned to it and starts, updates, or stops containers until the node matches that desired state, so nodes pull changes from the control plane rather than having them pushed. Problems that surface in the control plane can originate elsewhere, for example in a bug in a node’s container runtime or in the container network plugin, and the control plane has to work around them. Running the control plane components on several machines, with replicated API servers and etcd members, makes the control plane more resilient and easier to scale. It is also important to understand that nodes do not all have to run the same container runtime: any runtime that implements the Container Runtime Interface (CRI) works, and the choice depends on the application’s needs. The network plugin, by contrast, is normally chosen once for the whole cluster.

The following are the main components of a Kubernetes cluster:

- Nodes are the physical or virtual machines that run your workloads, and Kubernetes can manage large numbers of them. Workloads such as web servers, email servers, or content delivery network (CDN) services may each need different amounts of processing power, memory, and storage; the scheduler takes the resources each pod requests into account when placing it on a node, and many pods can run in parallel across the nodes. Pods are the building blocks of a Kubernetes cluster. Each pod contains one or more application containers, which are the programs that run on a Kubernetes cluster.

- The container runtime runs and manages the lifecycle of containers on each compute node in the cluster. It pulls container images, creates and starts containers, and stops them when the kubelet tells it to. The kubelet talks to the runtime through the Container Runtime Interface (CRI), so any CRI-compatible runtime, such as containerd or CRI-O, can be used.

- The kubelet is the agent that runs on every node and manages the containers described in the pods assigned to that node. It runs the liveness, readiness, and startup probes configured for each container and restarts containers that fail, according to the pod’s restart policy, which greatly reduces the need to restart or redeploy containers by hand. The kubelet is a critical piece of the system and must be able to communicate with the control plane; it does so through the Kubernetes API server, reporting node and pod status and learning which pods it should run.

- kube-proxy is a network proxy that runs on each node and implements part of the Kubernetes Service concept. It watches the API server for Services and their endpoints and maintains network rules on the node, using iptables or IPVS depending on its mode, so that traffic sent to a Service’s virtual IP address is forwarded to one of the Service’s backend pods. These rules allow network communication to your pods from inside or outside the cluster. For example, traffic that a cloud load balancer sends to a Service of type LoadBalancer arrives at a node and is then forwarded by kube-proxy’s rules to a backend pod.

- Pods are the smallest deployable units in Kubernetes. The application containers inside a pod handle requests and process data, while the pod provides their shared network identity and storage. Pods are ephemeral rather than durable: when a pod is deleted or its node fails, the pod is not revived; a controller creates a new pod to replace it. Data that must outlive a pod should therefore be kept in persistent volumes or external services. Each pod reports a status whose phase (Pending, Running, Succeeded, Failed, or Unknown), together with conditions such as Ready, shows where the pod is in its lifecycle and whether it can receive requests through a Service.
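The kubelet restarts failing containers with exponential back-off: per the Kubernetes documentation, a repeatedly failing container is restarted after 10 seconds, then 20, 40, and so on, with the delay capped at five minutes (the familiar `CrashLoopBackOff` state). The sketch below computes those documented default delays; the function name is hypothetical, and the exact values may differ in your Kubernetes version.

```python
INITIAL_DELAY_S = 10  # documented default first restart delay
MAX_DELAY_S = 300     # documented default cap (five minutes)


def restart_delay(restarts_so_far: int) -> int:
    """Seconds the kubelet waits before the next restart of a failing container.

    `restarts_so_far` is 0 for the first restart. The delay doubles each time
    until it reaches the cap; per the docs, the back-off resets after a
    container has run for ten minutes without problems.
    """
    if restarts_so_far < 0:
        raise ValueError("restarts_so_far must be non-negative")
    return min(INITIAL_DELAY_S * 2 ** restarts_so_far, MAX_DELAY_S)


if __name__ == "__main__":
    print([restart_delay(n) for n in range(7)])
```

For restart counts 0 through 6, the sketch yields 10, 20, 40, 80, 160, 300, and 300 seconds.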
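To illustrate what kube-proxy’s rules accomplish, the sketch below models them as an in-memory table that maps a Service’s virtual IP and port to the addresses of its ready endpoints, and forwards each new connection to a randomly chosen backend, which is roughly how the iptables mode distributes traffic. The `ServiceTable` class and all addresses are hypothetical; real kube-proxy programs the kernel’s packet-handling rules rather than answering function calls.

```python
import random
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Endpoint:
    ip: str
    port: int
    ready: bool = True


@dataclass
class ServiceTable:
    """Hypothetical stand-in for the forwarding rules kube-proxy maintains."""
    rules: Dict[Tuple[str, int], List[Endpoint]] = field(default_factory=dict)
    rng: random.Random = field(default_factory=random.Random)

    def sync(self, vip: str, port: int, endpoints: List[Endpoint]) -> None:
        """Rewrite the rule for one Service; only ready endpoints get traffic."""
        self.rules[(vip, port)] = [e for e in endpoints if e.ready]

    def forward(self, vip: str, port: int) -> Tuple[str, int]:
        """Pick a backend for a new connection to the Service's virtual IP."""
        backends = self.rules.get((vip, port), [])
        if not backends:
            raise ConnectionRefusedError(f"no ready endpoints for {vip}:{port}")
        chosen = self.rng.choice(backends)  # random choice, like iptables mode
        return chosen.ip, chosen.port


if __name__ == "__main__":
    table = ServiceTable(rng=random.Random(42))
    table.sync("10.96.0.20", 80, [
        Endpoint("10.244.1.5", 8080),
        Endpoint("10.244.2.7", 8080),
        Endpoint("10.244.3.9", 8080, ready=False),  # failing readiness: excluded
    ])
    for _ in range(4):
        print(table.forward("10.96.0.20", 80))
```

Because `sync` is called again whenever endpoints change, a pod that fails its readiness check simply drops out of the rotation until it recovers.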

Kubernetes Architecture Best Practices

Here are some best practices for architecting effective Kubernetes clusters, according to Gartner:

- Keep the Kubernetes versions of your clusters and their components in sync and within the supported version skew. When a critical update, such as a security fix, is released, upgrade your cluster promptly.

- Start early by teaching developers how to identify and solve Kubernetes issues faster. You can also identify the bottlenecks in your software development process early and train your team to work better together.

- Standardizing the tools and vendor ecosystem across the entire organization is a key factor in succeeding with any cloud-based application. Standardizing governance from the top down, from vendors to employees, builds a culture of compliance and efficiency.

- Scanning code and container images for issues and logging the findings in an issue tracking system is only the first step in keeping code quality up to standard. Follow it up with regular code quality checks.

- Implement access controls, such as Kubernetes role-based access control (RBAC) policies, to control who can access which resources.

- Control access to your container network, for example with firewalls and Kubernetes NetworkPolicies that restrict which pods can communicate with each other. An insecure network can give anyone with a privileged SSH connection a path to remote management of your nodes. Scanning for malicious software and limiting its access further helps secure your container network.

- Larger images may be quicker to build, but they take up more disk space, network bandwidth, and CPU time to store, pull, and unpack, and they slow down pod startup. Therefore, choose images that are as small as possible while still providing what your application needs.

- Test your containers as thoroughly as possible to identify and correct issues before they reach production. For example, you can use a testing framework for your language, such as RSpec for Ruby, to test your code before it is deployed.

- Keep verbosity to a minimum. Verbosity can be useful, for example when describing pain points or areas of functionality that might not be immediately obvious to developers using the main product, but in general concise code and documentation are easier to maintain.

- Find the most important functions and group them together as a single, logical component. Consolidating functions into logical units keeps the application’s overall complexity low. You can also group related functions into logical packages, one per business unit.

- Use CI/CD to automate building, testing, and deploying your application; many CI/CD tools can help. If you use an automated build process, a CI/CD pipeline can also generate code coverage reports, which help you identify code that needs better test coverage as well as areas of concern or risk in your application.

- Manage the lifecycle of pods with readiness and liveness probes. A readiness probe tells Kubernetes when a container is ready to receive requests, so a pod receives traffic from a Service only after it passes; a liveness probe tells the kubelet when a container is unhealthy and should be restarted. A probe can run a command in the container, open a TCP connection, or send an HTTP GET request to a path and port that you configure.
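As a sketch of the readiness and liveness practice above, here is a tiny HTTP server, using only Python’s standard library, that an application could run alongside its main work to answer kubelet probes. The paths `/healthz` and `/readyz`, port 8080, and the `READY` warm-up flag are assumptions for illustration; the `PROBES` dictionary shows matching `livenessProbe` and `readinessProbe` fields for the container spec. For HTTP probes, the kubelet treats any status code from 200 to 399 as success.

```python
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

READY = threading.Event()  # hypothetical flag: set once warm-up work is done

# Matching probe fields for the container spec (port and paths are assumptions).
PROBES = {
    "livenessProbe": {"httpGet": {"path": "/healthz", "port": 8080}, "periodSeconds": 10},
    "readinessProbe": {"httpGet": {"path": "/readyz", "port": 8080}, "periodSeconds": 5},
}


class ProbeHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        if self.path == "/healthz":
            status = 200  # liveness: the process can still answer requests
        elif self.path == "/readyz":
            status = 200 if READY.is_set() else 503  # readiness: warmed up yet?
        else:
            status = 404
        body = str(status).encode()
        self.send_response(status)
        self.send_header("Content-Type", "text/plain")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format: str, *args) -> None:
        pass  # keep frequent probe requests out of the logs


def serve(port: int = 8080) -> ThreadingHTTPServer:
    """Start the probe server on a background thread and return it."""
    server = ThreadingHTTPServer(("", port), ProbeHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def check(port: int, path: str) -> int:
    """Send a GET the way an HTTP probe does and return the status code."""
    try:
        with urllib.request.urlopen(f"http://127.0.0.1:{port}{path}", timeout=1) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code


if __name__ == "__main__":
    srv = serve(0)  # port 0: let the OS pick a free port for this local demo
    port = srv.server_address[1]
    print(check(port, "/healthz"), check(port, "/readyz"))
    READY.set()
    print(check(port, "/readyz"))
    srv.shutdown()
```

Keeping the liveness check this cheap is deliberate: if it depended on downstream services, an outage elsewhere could make the kubelet restart healthy containers.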
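In Kubernetes, access control of the kind described in these practices is usually implemented with role-based access control (RBAC), which grants permissions through Roles bound to users or ServiceAccounts. The sketch below builds a read-only Role and a RoleBinding as Python dictionaries, wrapped in a `v1` `List` that could be serialized to JSON and applied, plus a simplified check of whether a Role permits a request. RBAC permissions are purely additive (there are no deny rules), which is what the `allows` function models. The namespace `billing`, the ServiceAccount `billing-app`, and the helper names are hypothetical.

```python
import json
from typing import Dict, List

RBAC_API = "rbac.authorization.k8s.io/v1"


def read_only_role(namespace: str, name: str, resources: List[str]) -> Dict:
    """A namespaced Role that may only read the given core-group resources."""
    return {
        "apiVersion": RBAC_API,
        "kind": "Role",
        "metadata": {"namespace": namespace, "name": name},
        "rules": [
            {"apiGroups": [""], "resources": resources, "verbs": ["get", "list", "watch"]}
        ],
    }


def bind_to_service_account(role: Dict, service_account: str) -> Dict:
    """A RoleBinding granting `role` to a ServiceAccount in the same namespace."""
    ns = role["metadata"]["namespace"]
    return {
        "apiVersion": RBAC_API,
        "kind": "RoleBinding",
        "metadata": {"namespace": ns, "name": f"{role['metadata']['name']}-binding"},
        "subjects": [{"kind": "ServiceAccount", "name": service_account, "namespace": ns}],
        "roleRef": {"apiGroup": "rbac.authorization.k8s.io", "kind": "Role",
                    "name": role["metadata"]["name"]},
    }


def allows(role: Dict, api_group: str, resource: str, verb: str) -> bool:
    """Simplified RBAC check: allowed only if some rule matches all three fields."""
    def matches(values: List[str], wanted: str) -> bool:
        return "*" in values or wanted in values

    return any(
        matches(rule["apiGroups"], api_group)
        and matches(rule["resources"], resource)
        and matches(rule["verbs"], verb)
        for rule in role["rules"]
    )


if __name__ == "__main__":
    role = read_only_role("billing", "billing-reader", ["configmaps"])
    manifest = {"apiVersion": "v1", "kind": "List",
                "items": [role, bind_to_service_account(role, "billing-app")]}
    print(json.dumps(manifest, indent=2))
```

Starting from a narrow, read-only Role and adding verbs or resources only when a workload demonstrably needs them keeps each ServiceAccount close to least privilege.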

Conclusion

Kubernetes is a system for automating the deployment, scaling, and management of containerized applications. It automates much of the work of maintaining applications while still providing a high level of control and flexibility. Kubernetes is an open-source platform that can run in private data centers, in public clouds, or across both. It can run any application packaged as containers in OCI-compatible images, such as those built with Docker. Kubernetes lets you deploy and manage your applications through a single, unified API, and it provides built-in capabilities such as service discovery, load balancing, resource management, and self-healing, with monitoring and logging available through widely used add-ons. Kubernetes is designed to be easy to use and extend: its APIs let you build custom components, such as custom resources and controllers, that extend the functionality of the platform. Kubernetes has been widely adopted as the de facto standard for container orchestration.